How to Run Gemma4 on Your Machine with Ollama

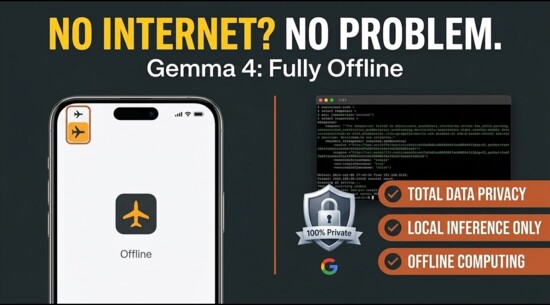

Gemma, Google’s family of open-weight models, brings high-performance AI directly to your own hardware. With Ollama, a lightweight and easy-to-use tool for running LLMs locally, you can set up these models in minutes.

Step 1: Install Ollama for Windows

- Navigate to the official Ollama website.

- Download the Windows installer.

- Run the .exe file. Once installed, Ollama runs in the background and appears as a small llama icon in your system tray.

Step 2: Configure Your Environment

Before pulling the model, it is crucial to manage your storage, especially if your C: drive is nearly full.

- Change the Model Storage Location

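- By default, Ollama saves models to C:\Users\<User>\.ollama\models. To move this to a larger drive (e.g., drive D:), set the OLLAMA_MODELS environment variable to a folder on that drive, then restart Ollama so it picks up the change.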
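Before downloading several gigabytes, it can help to confirm which folder Ollama will write to and how much room that drive has. The following is a minimal Python sketch, not part of Ollama itself: it assumes the OLLAMA_MODELS environment variable is how the storage location is overridden, and the helper names resolve_models_dir and free_gib are made up for this example.

```python
import os
import shutil
from pathlib import Path


def resolve_models_dir(env=None):
    """Return the folder Ollama is expected to use for model files."""
    env = os.environ if env is None else env
    custom = env.get("OLLAMA_MODELS")  # assumed override variable
    if custom:
        return Path(custom)
    # Default location: <home>/.ollama/models
    return Path.home() / ".ollama" / "models"


def free_gib(path):
    """Free space, in GiB, on the drive that holds `path`."""
    probe = Path(path)
    # Walk up to the nearest existing folder so disk_usage does not fail
    # when the models folder has not been created yet.
    while not probe.exists() and probe != probe.parent:
        probe = probe.parent
    return shutil.disk_usage(probe).free / 2**30


if __name__ == "__main__":
    models_dir = resolve_models_dir()
    print(f"Models folder: {models_dir}")
    print(f"Free space:    {free_gib(models_dir):.1f} GiB")
```

Run it in a fresh terminal after changing the variable; if the printed folder is still on C:, the new value has not been picked up yet.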

Step 3: Pull the Model

- Open your terminal (Command Prompt or PowerShell) and run:

ollama run gemma4

(This will download the default 9B version. Use gemma4:e2b for other sizes.)

- Alternatively, you can use a GUI for Ollama (like Page Assist or Open WebUI) to manage downloads via a visual interface.
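Once the model is downloaded, you can also reach it from your own scripts through the local HTTP API that Ollama exposes. The sketch below is one possible way to do that with only the Python standard library. It assumes Ollama is listening on its default address (localhost, port 11434) and that the model tag is gemma4 as used in this article; build_request and ask are illustrative helper names.

```python
import json
import urllib.request

# Assumed default address of the local Ollama server.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt, model="gemma4"):
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="gemma4"):
    """Send the prompt and return the reply text (needs Ollama running)."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]


# Usage, with Ollama running in the background:
#   print(ask("Say hello in one short sentence."))
```

Setting "stream" to False asks the server for one complete JSON reply instead of a stream of partial chunks, which keeps the example short.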

Step 4: Real-World Testing & Capabilities

The Riddle Test (Reasoning Check)

Gemma is highly capable, but like all models, it can occasionally stumble on "trick" logic.

- Prompt: "June’s father has 3 kids. The eldest is March, the second is April. What is the name of the third child?"

- Observation: Smaller or more heavily quantized variants may guess "May" because it continues the month pattern. The correct answer is June, since the riddle opens with "June’s father". For tasks that need stricter logical grounding, prefer the 9B or 27B versions.
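If you want to repeat the riddle across several model sizes, a tiny grader saves you from reading every reply by hand. This is a rough heuristic sketch, not a proper benchmark: grade_riddle is a made-up helper that only looks for the words "June" and "May" in whatever reply text you pass in.

```python
import re

RIDDLE = (
    "June's father has 3 kids. The eldest is March, the second is April. "
    "What is the name of the third child?"
)


def grade_riddle(reply):
    """Classify a reply as 'correct', 'pattern_trap', or 'unclear'."""
    if re.search(r"\bJune\b", reply, flags=re.IGNORECASE):
        return "correct"
    # Case-sensitive on purpose, so the verb "may" is not counted as a name.
    if re.search(r"\bMay\b", reply):
        return "pattern_trap"
    return "unclear"


if __name__ == "__main__":
    # Hand-written sample replies, not real model output.
    for sample in ("The third child is June.", "The third child is May."):
        print(f"{sample!r} -> {grade_riddle(sample)}")
```

A reply that mentions both names is counted as correct, so skim those cases manually if precision matters.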

Vision and Summarization

Gemma is excellent at summarization. If you use the vision-capable variants (like PaliGemma), you can feed them images to:

- Extract text or specific values from tables.

- Summarize the visual context of a scene.

- Identify objects with high precision.
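For image tasks like the ones listed above, Ollama's generate endpoint accepts images as base64-encoded strings in an "images" list. The sketch below only builds the request body; the function name build_vision_payload is invented for this example, and the model tag is left as a parameter because which vision-capable tag you have installed is an assumption this article cannot check for you.

```python
import base64
import json


def build_vision_payload(model, prompt, image_bytes):
    """Return the JSON body for an image prompt sent to /api/generate."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return json.dumps(
        {
            "model": model,          # tag of a vision-capable model you pulled
            "prompt": prompt,
            "images": [encoded],     # one base64 string per image
            "stream": False,
        }
    )


# Usage (file name is a placeholder):
#   with open("table.png", "rb") as f:
#       body = build_vision_payload("<your-vision-model>", "Read the table.", f.read())
#   Then POST `body` to http://localhost:11434/api/generate.
```

Text-only models will ignore or reject the image, so check that the variant you pulled actually supports vision before relying on the output.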

Coding Performance

For developers, Gemma is a solid companion for C#, Python, and SQL:

- Basic Logic: It excels at writing boilerplate code, unit tests, and debugging.

- Limits: It may struggle with complex, multi-layered animations or highly specialized frameworks without specific prompting. It is best used as a logic assistant rather than a full-scale creative animator.

Setting up Gemma with Ollama gives you a private, offline, and powerful AI. By correctly setting your environment variables and choosing the right model size for your GPU (like an RTX 3070 Ti or similar), you can turn your local machine into a high-performance AI workstation.
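To make "the right model size for your GPU" a little more concrete, a back-of-the-envelope estimate is: weight memory is roughly the parameter count times the bits per weight, divided by 8. The sketch below applies that rule. The 4-bit default and the 1.5 GB headroom for context and runtime overhead are assumptions chosen for illustration, and the 8 GB figure is the VRAM of an RTX 3070 Ti; real usage varies with context length and quantization format.

```python
def weight_gb(params_billion, bits_per_weight=4):
    """Approximate size of the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * bits_per_weight / 8


def fits(params_billion, vram_gb, bits_per_weight=4, headroom_gb=1.5):
    """Rough check: do the weights plus assumed headroom fit in VRAM?"""
    return weight_gb(params_billion, bits_per_weight) + headroom_gb <= vram_gb


if __name__ == "__main__":
    for size in (9, 27):
        print(f"{size}B at 4-bit: ~{weight_gb(size):.1f} GB, "
              f"fits in 8 GB: {fits(size, vram_gb=8)}")
```

By this estimate a 9B model at 4-bit needs about 4.5 GB for weights and fits on an 8 GB card, while a 27B model needs about 13.5 GB and would spill into system RAM, which still works but runs much slower.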
